Natural Language Processing

Have you ever asked a virtual assistant a question or received a remarkably accurate translation from an online tool and paused to wonder, “How does the machine understand me?” The answer lies in the sophisticated field of Natural Language Processing (NLP). At its core, NLP is the branch of artificial intelligence that gives machines the ability to read, interpret, and make sense of human language. It is the foundational technology bridging the gap between human communication and computer understanding, transforming unstructured text and speech into structured data that machines can act upon. This capability has moved from a niche academic pursuit to a central driver of modern technology, revolutionizing everything from how we search the web to how businesses understand their customers. In this deep dive, we will unravel the evolution of this field, explore the key techniques that make it work, examine its profound real-world applications, and confront the critical challenges and ethical considerations that shape its responsible development. The journey of natural language processing is one of the most significant in modern computing, and its impact is only beginning to be felt.

The Foundational Evolution of Natural Language Processing

The story of NLP is a compelling narrative of ambition, limitation, and breakthrough. Its evolution mirrors the broader trajectory of AI itself, moving from rigid, rule-based systems to the dynamic, data-driven models of today. The earliest efforts in the 1950s, like the Georgetown-IBM experiment in machine translation, were symbolic and heuristic. Researchers painstakingly hand-coded linguistic rules—grammar, syntax, and limited vocabulary—into systems. These rule-based approaches, while pioneering, were brittle. They failed spectacularly when confronted with the ambiguity, nuance, and sheer irregularity of natural human language. A system programmed with a fixed rule couldn’t parse a sentence it hadn’t been explicitly taught to handle.

The paradigm shift began with the rise of statistical methods in the late 1980s and 1990s. Instead of relying solely on linguist-defined rules, researchers began using machine learning algorithms to find probabilistic patterns in large corpora of text. In this era, hidden Markov models powered early speech recognition systems, and statistical machine translation, pioneered in IBM’s research and later deployed at scale in the early versions of Google Translate in the 2000s, began to show promise. The key insight was that meaning could be inferred from context and frequency. However, these models still relied heavily on human-engineered features.

The true revolution arrived with the deep learning wave, marked first by neural word embeddings such as Word2Vec (2013) and then by the transformer architecture (2017). These models, especially the transformer, enabled a leap in language understanding. They could process each word in relation to all other words in a sentence (self-attention), capturing context far more accurately than earlier approaches. This led directly to the development of large language models (LLMs) like GPT (Generative Pre-trained Transformer) and BERT (Bidirectional Encoder Representations from Transformers). Pre-trained on vast amounts of text, much of it drawn from the internet, these models develop a rich, contextual representation of language, which can then be fine-tuned for specific tasks. The evolution from rules to statistics to neural networks marks NLP’s journey from a tool that could manipulate language to one that can begin to capture its meaning. For a historical perspective on this AI journey, the Encyclopædia Britannica provides excellent context.

NLP in Action: Everyday Applications You Already Use

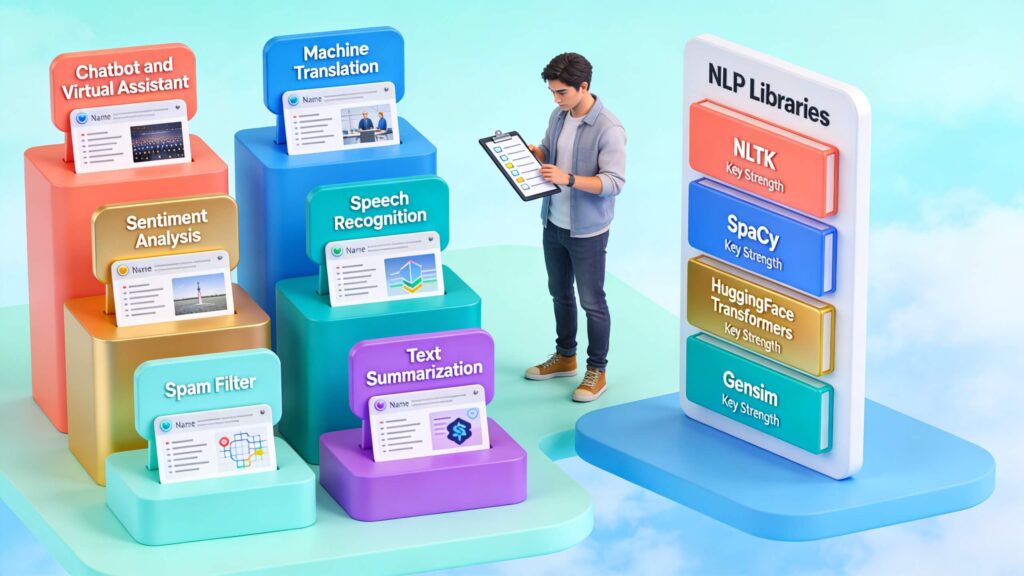

Far from being a distant, futuristic concept, NLP is deeply embedded in the fabric of daily digital life. Its applications are so seamless that we often use them without a second thought. When you speak a command to Amazon’s Alexa or Apple’s Siri, automatic speech recognition (ASR) converts your audio to text, and an NLP model parses the intent and entities within your query to execute an action or fetch an answer. The search engine you use, whether Google or Bing, employs sophisticated natural language processing algorithms to move beyond simple keyword matching. They interpret search intent, understand semantic relationships between words, and rank pages based on contextual relevance and quality, a process detailed by resources like Google Search Central.

Your email inbox uses NLP for spam filtering, classifying messages based on their content and metadata. The grammar and spell-checking tools in your word processor are powered by NLP models that understand syntax and style. On social media platforms, NLP drives content recommendation algorithms, suggests hashtags, and, more controversially, performs sentiment analysis and content moderation. Customer service has been transformed by NLP-powered chatbots and virtual agents that can handle routine inquiries, freeing human agents for complex issues. Perhaps one of the most visible applications is real-time machine translation, as seen in tools like Google Translate or Skype Translator, which allow for near-instantaneous cross-lingual communication. These are not speculative technologies; they are mature, operational systems that demonstrate the practical power of computational linguistics to enhance human productivity and connection.
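
The spam-filtering idea above can be sketched with a small naive Bayes classifier, one classic way to classify messages by content. This is a minimal illustration under stated assumptions, not a description of how any particular email provider works: the class name `NaiveBayesSpamFilter`, the whitespace tokenizer, and the four training messages are all hypothetical.

```python
import math
from collections import Counter


def tokenize(text):
    # Simplifying assumption: lowercase and split on whitespace.
    return text.lower().split()


class NaiveBayesSpamFilter:
    """Toy multinomial naive Bayes with add-one (Laplace) smoothing."""

    LABELS = ("spam", "ham")

    def __init__(self):
        self.word_counts = {label: Counter() for label in self.LABELS}
        self.doc_counts = Counter()

    def train(self, text, label):
        self.doc_counts[label] += 1
        self.word_counts[label].update(tokenize(text))

    def predict(self, text):
        # Assumes at least one training message per label has been seen.
        vocab = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total_docs = sum(self.doc_counts.values())
        scores = {}
        for label in self.LABELS:
            # Start from the log prior: how common this label is overall.
            score = math.log(self.doc_counts[label] / total_docs)
            total_words = sum(self.word_counts[label].values())
            for word in tokenize(text):
                # Add-one smoothing keeps unseen words from zeroing the score.
                count = self.word_counts[label][word] + 1
                score += math.log(count / (total_words + len(vocab)))
            scores[label] = score
        return max(scores, key=scores.get)


spam_filter = NaiveBayesSpamFilter()
spam_filter.train("win free money now", "spam")
spam_filter.train("free prize claim now", "spam")
spam_filter.train("meeting agenda for monday", "ham")
spam_filter.train("lunch on monday with the team", "ham")

print(spam_filter.predict("claim your free money"))      # spam
print(spam_filter.predict("agenda for the team lunch"))  # ham
```

Working in log space avoids multiplying many small probabilities together. Real filters typically combine content signals like these with metadata and far larger training sets.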

Defining the Core: What is Natural Language Processing?

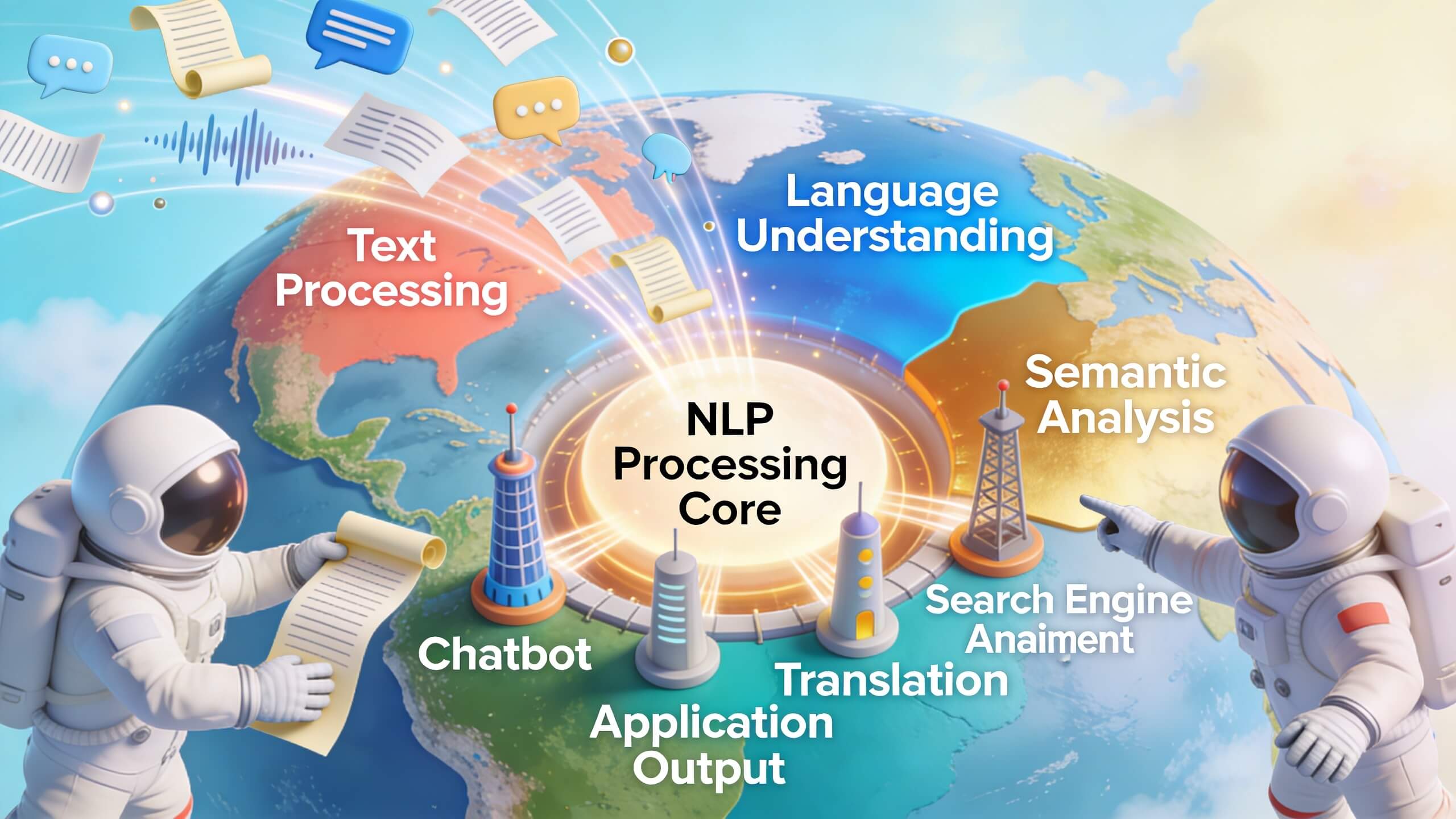

To solidify understanding, let’s define Natural Language Processing concisely. NLP is a multidisciplinary field at the intersection of computer science, artificial intelligence, and linguistics. Its primary goal is to enable computers to process, analyze, and generate human language in a way that is both meaningful and useful. This involves a pipeline of tasks: starting with low-level syntactic analysis (like tokenization and part-of-speech tagging), moving to semantic analysis (extracting meaning and relationships), and culminating in pragmatic application (dialogue management, text generation). A long-standing aspirational benchmark for NLP is the Turing Test, in which a system’s conversational output must be indistinguishable from that of a human. Whether current systems meet that bar is still debated, but the field is making rapid progress toward it through advanced language models.

Deconstructing the Engine: Key Techniques and Models

The magic of NLP is built upon a suite of interconnected techniques and architectural innovations. Understanding these is key to appreciating how machines parse language.

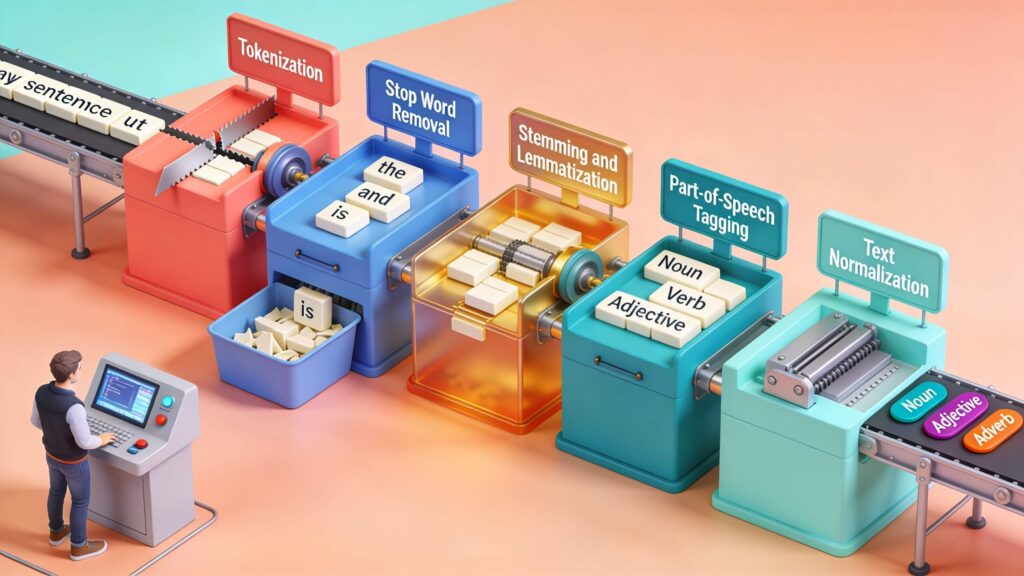

Fundamental Techniques: The workflow often begins with tokenization (breaking text into words or sub-words) and part-of-speech tagging (labeling words as nouns, verbs, etc.). Named Entity Recognition (NER) is crucial for identifying and classifying key information like person names, organizations, locations, and dates within text. Sentiment analysis gauges the emotional tone—positive, negative, neutral—behind a body of text. Topic modeling algorithms, such as Latent Dirichlet Allocation (LDA), can automatically discover abstract “topics” that occur in a collection of documents.
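
Two of the techniques above, tokenization and sentiment analysis, can be sketched in a few lines. This is a deliberately simple illustration: the regular expression, the tiny `POSITIVE` and `NEGATIVE` word lists, and the function names are assumptions made for the example, and production systems generally use trained models rather than hand-written lexicons.

```python
import re

# Hypothetical miniature sentiment lexicons, for illustration only.
POSITIVE = {"good", "great", "excellent", "love"}
NEGATIVE = {"bad", "terrible", "poor", "hate"}


def tokenize(text):
    # Lowercase, then keep runs of letters and digits,
    # allowing one internal apostrophe (as in "isn't").
    return re.findall(r"[a-z0-9]+(?:'[a-z]+)?", text.lower())


def sentiment(text):
    tokens = tokenize(text)
    # Score = number of positive tokens minus number of negative tokens.
    score = sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(tokenize("I love this phone, great battery!"))
# ['i', 'love', 'this', 'phone', 'great', 'battery']
print(sentiment("I love this phone, great battery!"))  # positive
print(sentiment("Terrible screen and poor sound."))    # negative
print(sentiment("It arrived on Monday."))              # neutral
```

A word-counting approach like this misses negation ("not good") and sarcasm, which is one reason learned models dominate in practice.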

The Neural Network Revolution: While the above techniques can be implemented with various methods, the current state-of-the-art is dominated by neural networks. Recurrent Neural Networks (RNNs) and Long Short-Term Memory (LSTM) networks were breakthroughs for handling sequential data like text, as they could maintain a “memory” of previous words. However, the transformer architecture, introduced in the seminal paper “Attention Is All You Need,” was the game-changer. Its self-attention mechanism allows the model to weigh the importance of all words in a sentence simultaneously when processing any single word, enabling a far richer understanding of context.
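
The self-attention step described above can be sketched in plain Python as scaled dot-product attention. One loud assumption: a real transformer derives queries, keys, and values from learned projection matrices and uses multiple attention heads, whereas this sketch feeds the same hypothetical toy embeddings in as all three to keep the arithmetic visible.

```python
import math


def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this token's query against every token's key.
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        # Normalize the scores into weights that sum to 1.
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights


# Hypothetical 2-dimensional embeddings for a three-token sentence.
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(embeddings, embeddings, embeddings)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` shows how strongly one token attends to every token in the sentence, including itself, which is the "all words simultaneously" behavior the paragraph describes.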

Large Language Models (LLMs): This architecture enabled the creation of LLMs. Models like OpenAI’s GPT series are trained with a self-supervised objective: predicting the next token (roughly, the next word or word piece) in a sequence across a vast corpus, often hundreds of billions of words from the internet. Through this process, they internalize grammar, factual associations, some reasoning-like abilities, and even style. These pre-trained models can then be fine-tuned on smaller, task-specific datasets to become expert summarizers, translators, or code generators. The power of these models lies in their generality and emergent abilities, a topic actively researched by institutions like Stanford AI Lab.
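
The next-word prediction objective can be illustrated with a count-based bigram model. This is only a sketch of the objective, not of an LLM: real models use neural networks over sub-word tokens and long contexts, while this example conditions on a single previous word, and the three-sentence corpus is hypothetical.

```python
from collections import Counter, defaultdict


def train_bigram_model(corpus):
    # For each word, count which words follow it in the training text.
    model = defaultdict(Counter)
    for sentence in corpus:
        tokens = sentence.lower().split()
        for prev, nxt in zip(tokens, tokens[1:]):
            model[prev][nxt] += 1
    return model


def predict_next(model, word):
    followers = model.get(word.lower())
    if not followers:
        return None  # The word never appeared with a successor in training.
    # Return the most frequent follower seen in the corpus.
    return followers.most_common(1)[0][0]


corpus = [
    "the cat sat on the mat",
    "the cat chased the mouse",
    "the dog sat on the rug",
]
model = train_bigram_model(corpus)

print(predict_next(model, "the"))    # cat
print(predict_next(model, "sat"))    # on
print(predict_next(model, "zebra"))  # None
```

The training signal comes from the text itself, with no human labels required, which is what makes the objective self-supervised.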

The Inherent Hurdles: Core Challenges in NLP

Despite phenomenal progress, NLP systems still grapple with fundamental challenges rooted in the complexity of human language. Ambiguity is the foremost obstacle. Lexical ambiguity (a word like “bank” meaning a financial institution or a river edge) and syntactic ambiguity (e.g., “I saw the man with the telescope”) require deep world knowledge and context to resolve. Sarcasm, irony, and humor are particularly thorny, as they often rely on saying the opposite of the literal meaning, dependent on cultural and situational context.
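
The “bank” example can be made concrete with a simplified version of the Lesk algorithm, which picks the sense whose dictionary gloss shares the most words with the surrounding sentence. The sense inventory, glosses, and stopword list below are hypothetical and written for this example; the sketch shows why context matters, not how modern systems resolve ambiguity.

```python
# Hypothetical stopword list and sense glosses, for illustration only.
STOPWORDS = {"a", "an", "the", "of", "to", "in", "on", "at", "i", "and", "or", "that"}

SENSES = {
    "bank": {
        "financial_institution": "an institution that accepts deposits of money and makes loans",
        "river_edge": "the sloping land beside a river or stream of water",
    }
}


def content_words(text):
    # Lowercase, strip simple punctuation, and drop stopwords.
    return {w.strip(".,!?").lower() for w in text.split()} - STOPWORDS


def disambiguate(word, sentence):
    context = content_words(sentence)
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        # Count the words shared between the sentence and this sense's gloss.
        overlap = len(context & content_words(gloss))
        if overlap > best_overlap:  # On a tie, the first listed sense wins.
            best_sense, best_overlap = sense, overlap
    return best_sense


print(disambiguate("bank", "I deposited money at the bank"))
# financial_institution
print(disambiguate("bank", "We fished from the bank of the river"))
# river_edge
```

Exact word overlap is brittle: "deposited" does not match "deposits" here, so only "money" tips the first example. That fragility is part of why contextual neural representations largely replaced this style of approach.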

Another major challenge is the handling of rare words, out-of-vocabulary terms, and the dynamic nature of language where new slang and neologisms constantly emerge. Furthermore, the data used to train these models often contains societal biases. An NLP model trained on historical internet text can inadvertently learn and amplify gender, racial, or socioeconomic prejudices present in the data, leading to biased outputs in hiring tools, loan applications, or law enforcement risk assessments. This makes fairness and debiasing a critical area of research, as highlighted by work from the Partnership on AI. Finally, the resource intensity of training massive LLMs raises concerns about environmental sustainability and accessibility, potentially centralizing this powerful technology in the hands of a few large corporations with vast computational resources.

Transforming Enterprise: NLP in Business and Industry

The business value of NLP is immense, as it turns unstructured text data, which by commonly cited industry estimates makes up around 80% of enterprise data, into actionable intelligence. In customer experience, NLP powers sentiment analysis on reviews and social media, allowing companies to gauge brand perception in real-time. Chatbots and virtual agents automate tier-1 support, reducing costs and wait times. In sales and marketing, NLP tools can analyze call transcripts to coach representatives, generate personalized content, and identify sales leads from news articles.

The finance sector uses NLP for algorithmic trading by analyzing news sentiment and earnings reports, for fraud detection by monitoring unusual patterns in communication, and for risk assessment by parsing complex legal and regulatory documents. In healthcare, a field with its own specialized lexicon, NLP is revolutionizing clinical documentation, extracting patient information from doctor’s notes, powering diagnostic support systems by parsing medical literature, and enabling more efficient clinical trial matching. Human Resources leverages NLP to screen resumes, reduce bias in job descriptions, and analyze employee feedback from surveys. The following table illustrates the cross-industry impact:

| Industry | Primary NLP Applications |
|---|---|
| Customer Service | Sentiment analysis, chatbots, call center analytics, email routing. |
| Finance | Algorithmic trading signals, fraud detection, contract analysis, compliance monitoring. |
| Healthcare | Clinical documentation support, medical literature mining, patient data extraction, pharmacovigilance. |
| Legal | E-discovery (document review), contract analysis and generation, legal research. |
| Media & Publishing | Content recommendation, automated summarization, trend detection, personalized news feeds. |

For businesses looking to implement these technologies, understanding data governance and model management is critical, areas covered by frameworks from ISO standards on AI.

Case in Point: The IBM Watson Journey

A seminal case study in enterprise NLP is IBM Watson. Famously winning Jeopardy! in 2011, Watson showcased an early, powerful form of question-answering and natural language understanding. IBM pivoted this technology toward enterprise solutions, focusing on specific industries like healthcare, finance, and customer service. Watson’s NLP capabilities were used to help oncologists by ingesting and analyzing vast volumes of medical research to suggest treatment options, a powerful demonstration of information extraction. In customer care, Watson Assistant became a leading platform for building sophisticated, context-aware virtual agents. While the journey had its challenges, including the high complexity and cost of implementation, Watson’s evolution highlights key lessons: the importance of domain-specific tuning (a general model is rarely sufficient for expert tasks), the need for explainable AI in high-stakes fields like medicine, and the shift from a single “brain” to a suite of composable AI services. It underscored that the value of natural language processing is not in creating a general intelligence, but in building reliable, specialized tools that augment human expertise.

On the Horizon: Future Trends and Directions

The frontier of NLP is moving at a breathtaking pace. Several key trends are defining its future. First is the continued scaling of multimodal models. Systems like OpenAI’s DALL-E, which generates images from text prompts, and GPT-4V, which accepts images alongside text, are not purely NLP; they connect language with other modalities, and audio and video are increasingly part of the picture. This can lead to more robust, human-like understanding, as concepts are grounded in more than one form of data.

Second is the push toward greater efficiency and accessibility. The computational cost of training and running LLMs is driving research into model compression, distillation (training smaller models to mimic larger ones), and more efficient architectures. This democratization, alongside open-source initiatives, will broaden access. Third, retrieval-augmented generation (RAG) is becoming a standard architecture. Instead of relying solely on a model’s internal knowledge (which can be outdated or hallucinated), RAG systems retrieve relevant information from external, trusted knowledge bases in real-time and use it to ground their responses, dramatically improving accuracy and reducing fabrication.
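
The retrieval half of a RAG pipeline can be sketched with bag-of-words cosine similarity. Several assumptions apply: production systems typically use dense embeddings and a vector index rather than word counts, the three documents and the prompt template are hypothetical, and the sketch stops at building the grounded prompt instead of calling a language model.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    # Represent text as lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    # Cosine similarity between two word-count vectors.
    shared = set(a) & set(b)
    numerator = sum(a[w] * b[w] for w in shared)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    denominator = norm_a * norm_b
    return numerator / denominator if denominator else 0.0


def retrieve(query, documents, k=1):
    # Rank documents by similarity to the query and keep the top k.
    q = bag_of_words(query)
    ranked = sorted(documents, key=lambda d: cosine(q, bag_of_words(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    # Place the retrieved text in front of the question to ground the answer.
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


knowledge_base = [
    "Refunds are processed within 14 days of the return request.",
    "Our support line is open on weekdays from 9 to 5.",
    "Shipping to Europe takes 5 to 7 business days.",
]

print(build_prompt("How long do refunds take?", knowledge_base))
```

Because the model is asked to answer from the retrieved passage, updating the knowledge base changes the answers without retraining the model.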

Finally, the focus on alignment and safety is intensifying. As models become more capable, ensuring they are helpful, honest, and harmless (the “HHH” framework) is paramount. Research into constitutional AI, where models are trained to follow a set of core principles, and advanced reinforcement learning from human feedback (RLHF) are central to this effort. The goal is to build systems that are not just intelligent, but also trustworthy and aligned with human values, a complex endeavor explored by researchers at Google DeepMind and other labs.

The Imperative of Ethics in NLP Development

The power of NLP brings profound ethical responsibilities that the industry must confront head-on. Bias and Fairness remain among the most pressing issues. Since models learn from human-generated data, they can perpetuate and amplify societal biases. Debiasing requires careful curation of training datasets, algorithmic techniques to identify and mitigate bias, and continuous auditing of model outputs in deployment. Privacy is another critical concern. NLP models trained on personal communications, emails, or social media posts risk memorizing and potentially leaking sensitive personal information. Techniques like differential privacy and federated learning are being explored to train models without exposing raw data.
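
The core idea behind differential privacy can be sketched with the Laplace mechanism applied to a simple count. This is an illustration of the principle only: privately training a model requires more involved methods (such as adding noise to gradients), and the function names, the example count, and the choice of epsilon below are assumptions made for the example.

```python
import random


def laplace_noise(scale, rng):
    # The difference of two independent exponential samples with mean
    # `scale` follows a Laplace distribution centered at 0 with that scale.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(true_count, epsilon, sensitivity=1.0, rng=None):
    """Release a count with Laplace noise calibrated to sensitivity / epsilon.

    Smaller epsilon means more noise and stronger privacy. A sensitivity
    of 1 assumes each person changes the count by at most one.
    """
    if rng is None:
        rng = random.Random()
    return true_count + laplace_noise(sensitivity / epsilon, rng)


# Hypothetical query: how many messages in a corpus mention a given term.
rng = random.Random(42)
print(private_count(100, epsilon=1.0, rng=rng))
print(private_count(100, epsilon=0.1, rng=rng))  # noisier, more private
```

The released number is close enough to the truth to be useful in aggregate while limiting what can be inferred about any single individual’s data.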

Transparency and Explainability are essential for trust, especially in high-stakes domains like healthcare or criminal justice. The “black box” nature of deep learning models makes it difficult to understand why they made a particular decision. Developing interpretable models and explanation interfaces is an active research area. Furthermore, the potential for misuse in generating misinformation, deepfake text, or automated phishing campaigns at scale is real. This necessitates the development of robust detection tools and perhaps even cryptographic provenance standards for AI-generated content. Ultimately, ethical natural language processing requires a proactive framework, integrating ethicists, social scientists, and domain experts into the development lifecycle, guided by principles from organizations like the ACM Code of Ethics.

Conclusion

The journey through the world of Natural Language Processing reveals a field that has evolved from a linguistic curiosity to a foundational technology shaping the human-digital interface. We have traced its path from rigid rule-based systems to the dynamic, context-aware large language models of today, seen its silent integration into our daily applications, and deconstructed the key techniques that power this quiet revolution. The business implications are vast, turning unstructured text into a strategic asset across every vertical, from healthcare to finance. Yet, this power is tempered by significant technical challenges—ambiguity, bias, and resource demands—and even more critical ethical imperatives concerning fairness, privacy, and safety. The future points toward more multimodal, efficient, and grounded systems, but their success will hinge on our collective commitment to responsible innovation. For businesses, the message is clear: understanding and strategically implementing NLP is no longer optional; it is a core competency for the data-driven age. The potential to enhance customer understanding, automate complex analysis, and unlock new forms of creativity is at your fingertips. The question is no longer if machines can understand our language, but how wisely we will guide them to use that understanding. To explore how these transformative natural language processing capabilities can be tailored to drive your specific business objectives and digital strategy, consult with our experts today and begin architecting your intelligent communication future.